Case 02 · Amazon Robotics

Autonomous fleet monitoring improvements.

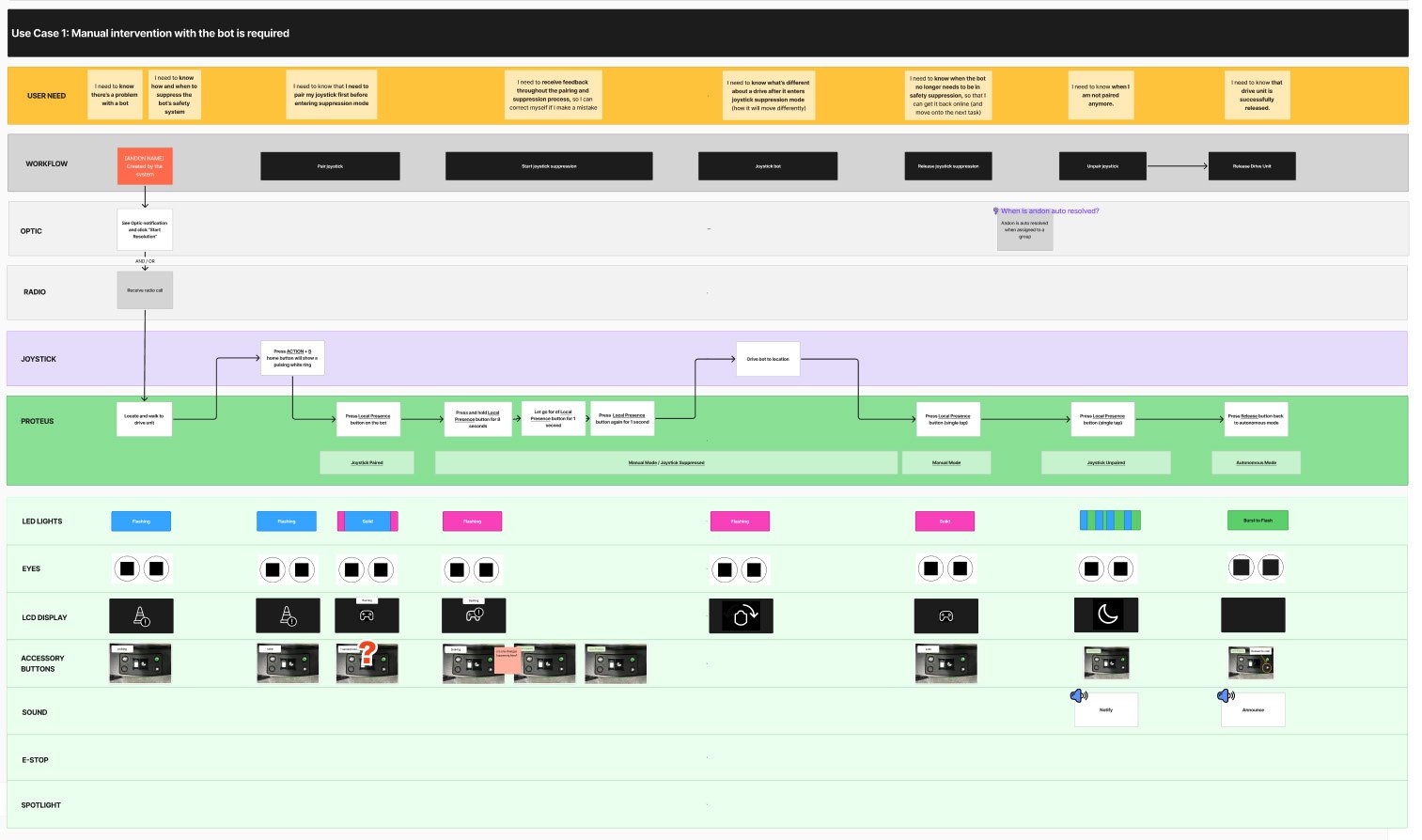

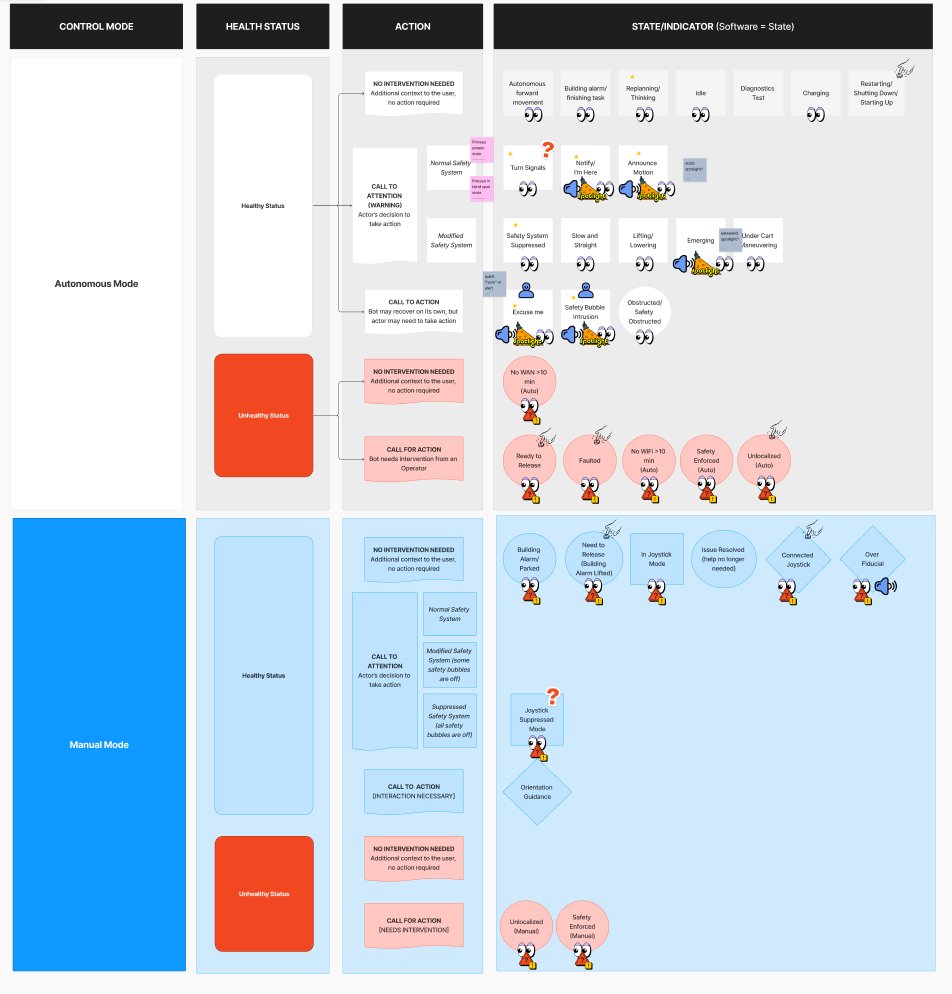

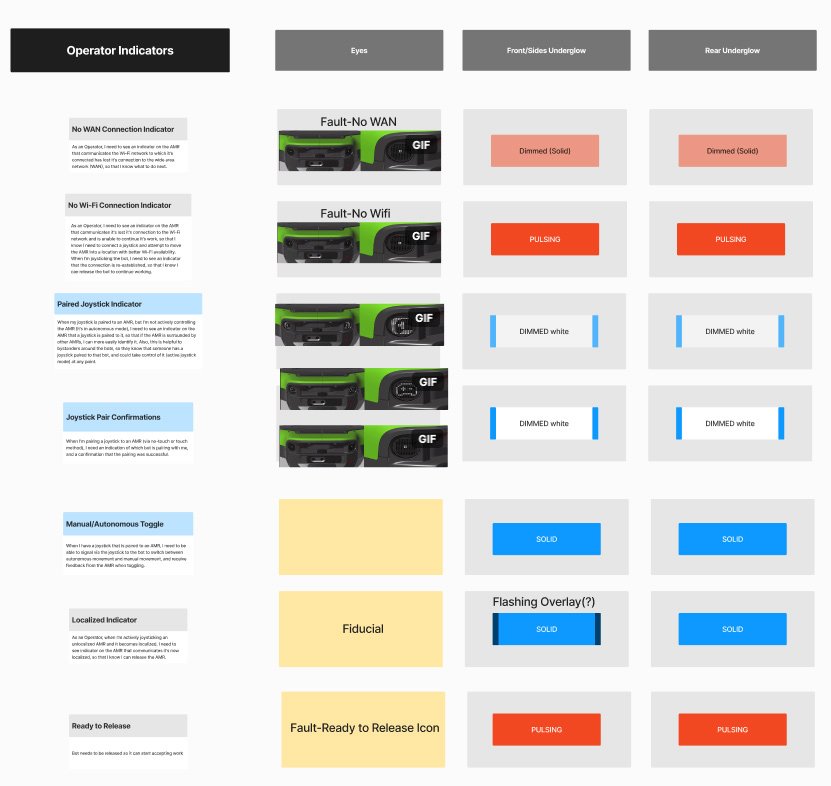

Proteus — Amazon's first autonomous drive unit — is a collaborative robot that transports carts of customer packages from collection chutes to outbound truck loading. I led the operator-facing UX for Proteus: the on-robot indicators, the floor-monitor resolution workflows, and the HRI framework guiding the Gen2 hardware redesign.

Role

Senior UX Lead — HRI framework + Gen2 hardware UX

Team

Hardware, robotics software, ops, program

Timeline

2024 – Present · Gen2 in Alpha